In 2026, the educational sector faces a massive scaling challenge. Global student enrollment in higher education will likely reach 263 million by the end of this year. Traditional grading methods cannot keep up with this volume. Manual feedback is slow and often inconsistent. This delay hinders student progress.

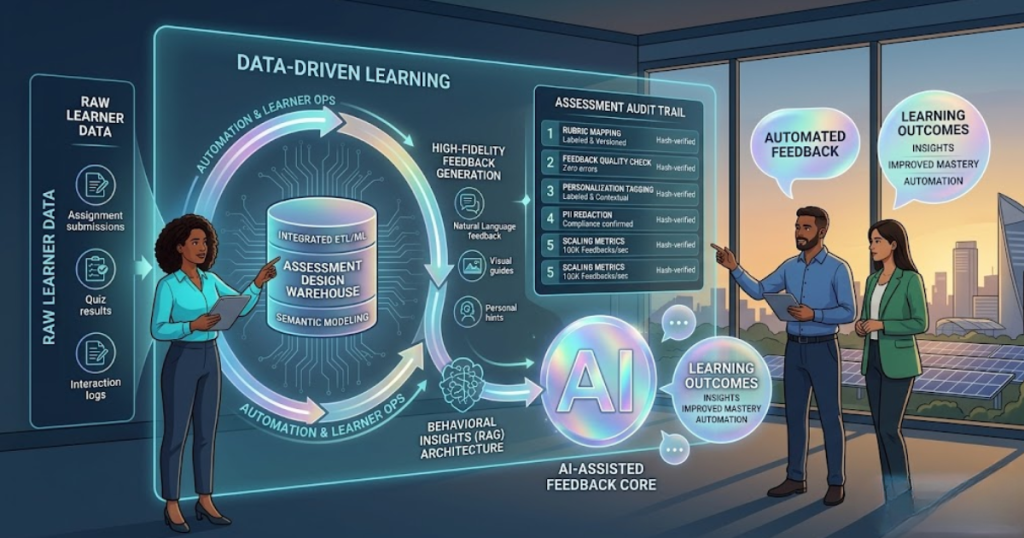

Education Data Analytics provides the technical framework to solve this. By using automated assessment design, institutions can provide high-fidelity feedback instantly. This shift moves beyond simple multiple-choice questions. It involves complex natural language processing and behavioral tracking. Professional Education Data Analytics Services now help schools build these systems to ensure every student receives personal guidance at scale.

The Technical Need for High-Fidelity Feedback

High-fidelity feedback is specific, actionable, and timely. In a large university, a professor might take two weeks to grade an essay. By then, the student has moved to a new topic. The feedback loses its impact.

The Role of Latency in Learning

Educational psychology shows that feedback is most effective when it occurs within minutes of the task.

- Immediate Feedback: Improves retention by up to 40%.

- Delayed Feedback: Often leads to the “Reification of Error,” where students memorize incorrect concepts.

Automated systems eliminate this latency. They use Education Data Analytics to analyze student inputs against a rubric in real-time. This allows for an iterative learning process where students correct mistakes immediately.

Engineering the Automated Assessment Pipeline

Building an automated assessment system requires a sophisticated data pipeline. This pipeline must handle unstructured data like text, code, and even voice.

1. Natural Language Processing (NLP) for Essays

Modern assessments use NLP to evaluate open-ended responses. These systems do not just look for keywords. They analyze “Semantic Coherence” and “Argument Strength.”

- Transformer Models: Large Language Models (LLMs) act as the scoring engine.

- Vector Embeddings: The system converts student essays into mathematical vectors. It then compares these to “Gold Standard” responses from subject matter experts.
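
The comparison step described above can be sketched in a few lines. This is a minimal illustration, not a production scorer: the `embed` function is a hypothetical bag-of-words stand-in for a real embedding model, and `best_match_score` is a name invented for this example.

```python
import math
import re
from collections import Counter


def embed(text: str) -> Counter:
    """Toy stand-in for an embedding model: a bag-of-words count vector."""
    return Counter(re.findall(r"[a-z']+", text.lower()))


def cosine_similarity(a: Counter, b: Counter) -> float:
    """Cosine of the angle between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def best_match_score(student_answer: str, gold_answers: list[str]) -> float:
    """Highest similarity between the student answer and any gold response."""
    student_vec = embed(student_answer)
    return max(
        (cosine_similarity(student_vec, embed(g)) for g in gold_answers),
        default=0.0,
    )


gold = ["Photosynthesis converts light energy into chemical energy stored in glucose."]
answer = "Plants use photosynthesis to convert light into chemical energy."
print(round(best_match_score(answer, gold), 2))
```

In a real pipeline the count vectors would be replaced by dense vectors from a trained model, but the similarity calculation stays the same.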

2. Programmatic Assessment for STEM

In computer science and math, Education Data Analytics Services implement “Unit Testing” for student work.

- Static Analysis: Checks code for syntax and style.

- Dynamic Analysis: Runs the student’s code against multiple test cases to verify logic.

- Plagiarism Detection: Uses similarity tools such as “MOSS” (Measure of Software Similarity) to flag submissions that closely resemble one another, so staff can review them.
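
The “Dynamic Analysis” step above might look like the sketch below. The names `run_test_cases` and `student_divide` are hypothetical, and the sketch calls the student function directly in the same process. A real grader would run untrusted code in a sandbox with time and memory limits.

```python
from typing import Any, Callable


def run_test_cases(
    func: Callable[..., Any], cases: list[tuple[tuple, Any]]
) -> list[dict]:
    """Run func on each (args, expected) pair and record the outcome."""
    results = []
    for args, expected in cases:
        try:
            actual = func(*args)
            passed = actual == expected
            error = None
        except Exception as exc:  # a crash counts as a failed case
            actual, passed, error = None, False, f"{type(exc).__name__}: {exc}"
        results.append(
            {"args": args, "expected": expected, "actual": actual,
             "passed": passed, "error": error}
        )
    return results


# Hypothetical student submission: forgets to handle division by zero.
def student_divide(a, b):
    return a / b


cases = [((6, 3), 2), ((1, 4), 0.25), ((1, 0), None)]
report = run_test_cases(student_divide, cases)
for row in report:
    print(row["args"], "passed" if row["passed"] else f"failed ({row['error']})")
```

Recording the exception text, not just a pass or fail flag, is what lets the system turn a failed case into specific feedback.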

3. Psychometric Calibration

A test is only as good as its questions. Data analytics allow for “Item Response Theory” (IRT) modeling, which estimates how difficult each question is and how well it separates stronger students from weaker ones. If 90% of top-performing students miss a specific question, the system flags the question as potentially “Biased” or “Flawed” so that an instructor can review it.
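
As a rough sketch of the idea, the code below shows the standard two-parameter logistic (2PL) IRT curve and a simple flagging rule based on the 90% example above. The function names and the `max_top_miss_rate` parameter are assumptions made for illustration. A real calibration would fit the item parameters from response data with a dedicated psychometrics library.

```python
import math


def p_correct_2pl(theta: float, a: float, b: float) -> float:
    """2PL model: chance that a student of ability theta answers correctly.

    a is the item's discrimination and b is its difficulty.
    """
    return 1.0 / (1.0 + math.exp(-a * (theta - b)))


def flag_item(
    top_group: list[bool],
    bottom_group: list[bool],
    max_top_miss_rate: float = 0.9,
) -> bool:
    """Flag an item if top students mostly miss it or it discriminates backwards."""
    if not top_group or not bottom_group:
        raise ValueError("both groups need at least one response")
    top_miss_rate = (len(top_group) - sum(top_group)) / len(top_group)
    top_rate = sum(top_group) / len(top_group)
    bottom_rate = sum(bottom_group) / len(bottom_group)
    discrimination = top_rate - bottom_rate  # classical discrimination index
    return top_miss_rate >= max_top_miss_rate or discrimination < 0


# Nine of ten top students miss the item, so it is flagged for review.
print(flag_item([True] + [False] * 9, [True] * 5 + [False] * 5))
```

A flag here only means the item deserves a human look. It does not by itself show that the question is biased.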

Scaling Feedback via Education Data Analytics

Scaling is the primary benefit of automation. A single system can provide feedback to 100,000 students simultaneously. This is critical for Massive Open Online Courses (MOOCs) and global certification programs.

1. Multi-Modal Feedback Loops

In 2026, feedback is not just text. It is multi-modal.

- Visual Heatmaps: Show students exactly where in a diagram they made an error.

- Audio Summaries: Generative AI creates 30-second audio clips explaining complex mistakes.

- Adaptive Hints: Instead of giving the answer, the system provides a “Scaffolded Hint” based on the student’s previous errors.
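
One simple way to picture the “Scaffolded Hint” idea is a hint ladder that becomes more explicit each time the same error recurs. The `HINT_LADDER` contents, the error label `sign_error`, and the `next_hint` function are all hypothetical and serve only to illustrate the selection logic.

```python
# Hints for each error type, ordered from least to most explicit.
HINT_LADDER = {
    "sign_error": [
        "Check the sign of each term after you move it across the equals sign.",
        "When you subtract a negative number, what happens to the sign?",
        "Rewrite x - (-3) as x + 3, then continue from there.",
    ],
}

FALLBACK_HINT = "Review the worked example for this topic."


def next_hint(error_type: str, previous_errors: list[str]) -> str:
    """Pick a hint whose explicitness grows with repeats of the same error."""
    ladder = HINT_LADDER.get(error_type)
    if ladder is None:
        return FALLBACK_HINT
    level = min(previous_errors.count(error_type), len(ladder) - 1)
    return ladder[level]


print(next_hint("sign_error", []))
print(next_hint("sign_error", ["sign_error", "unit_error"]))
```

The final answer never appears in the ladder, which matches the goal of guiding the student rather than solving the problem for them.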

2. Quantitative Impact on Learning Outcomes

Recent data from Education Data Analytics implementations shows significant gains.

- Completion Rates: Online courses using automated feedback see a 25% increase in completion rates.

- Engagement: Students interact with automated feedback systems 3x more often than with traditional rubrics.

- Teacher Capacity: Automation reduces manual grading time by 70%, allowing teachers to focus on “At-Risk” students.

Strategy: Implementing Education Data Analytics Services

Institutions must take a structured approach to deploying these services. A “DIY” approach often leads to data silos and security risks.

1. Data Integration and Interoperability

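Most schools use multiple platforms like Canvas, Blackboard, and Moodle. Education Data Analytics Services build on the “LTI” (Learning Tools Interoperability) standard, which lets an external assessment tool launch from inside each of these platforms and pass results back. This allows one assessment engine to work with whichever platform a school already uses.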

- Unified Student Profiles: All assessment data flows into a single “Data Lake.”

- Real-Time Dashboards: Teachers see which students are struggling across all subjects in one view.
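
The sketch below illustrates the unified profile and the dashboard view in miniature. It assumes score records have already been exported from each platform and normalized to a 0 to 1 scale. The `ScoreRecord` fields, the function names, and the 0.6 threshold are hypothetical, and no LTI calls are shown.

```python
from collections import defaultdict
from dataclasses import dataclass
from statistics import mean


@dataclass(frozen=True)
class ScoreRecord:
    student_id: str
    source: str   # platform the record came from, e.g. "canvas"
    course: str
    score: float  # normalized to the range 0..1


def build_profiles(records: list[ScoreRecord]) -> dict[str, dict[str, float]]:
    """Merge records from all platforms into student -> course -> mean score."""
    grouped: dict = defaultdict(lambda: defaultdict(list))
    for r in records:
        grouped[r.student_id][r.course].append(r.score)
    return {
        sid: {course: mean(scores) for course, scores in courses.items()}
        for sid, courses in grouped.items()
    }


def struggling(profiles: dict[str, dict[str, float]], threshold: float = 0.6) -> dict[str, list[str]]:
    """List, per student, the courses whose mean score is below threshold."""
    flagged = {}
    for sid, courses in profiles.items():
        low = sorted(c for c, avg in courses.items() if avg < threshold)
        if low:
            flagged[sid] = low
    return flagged


records = [
    ScoreRecord("s1", "canvas", "math", 0.9),
    ScoreRecord("s1", "moodle", "writing", 0.5),
    ScoreRecord("s2", "blackboard", "math", 0.4),
    ScoreRecord("s2", "canvas", "math", 0.5),
]
profiles = build_profiles(records)
print(struggling(profiles))
```

Keeping the `source` field on every record makes it possible to trace a surprising number on the dashboard back to the platform it came from.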

2. Establishing Clear Rubrics

Automation requires a “Logical Rubric.” If a human cannot explain the grading criteria clearly, the AI cannot either. Consultants help educators translate “Intuitive Grading” into “Machine-Readable Rules.” This process often improves the quality of the assessment itself.
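
To make “Machine-Readable Rules” concrete, here is one possible shape for a rubric expressed as data plus checks. The criteria, point values, and helper names are invented for this example. A real rubric for essay quality would need far richer checks than these structural ones.

```python
import re
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Criterion:
    name: str
    points: int
    check: Callable[[str], bool]


def word_count_at_least(n: int) -> Callable[[str], bool]:
    return lambda text: len(text.split()) >= n


def paragraph_count_at_least(n: int) -> Callable[[str], bool]:
    return lambda text: len([p for p in text.split("\n\n") if p.strip()]) >= n


def has_citation(text: str) -> bool:
    """Looks for an author-year citation such as (Walker, 2017)."""
    return re.search(r"\([A-Z][A-Za-z]+,? \d{4}\)", text) is not None


# Hypothetical rubric: each rule is explicit enough for a machine to apply.
RUBRIC = [
    Criterion("Meets minimum length", 2, word_count_at_least(8)),
    Criterion("Cites at least one source", 3, has_citation),
    Criterion("Has three or more paragraphs", 2, paragraph_count_at_least(3)),
]


def score_essay(text: str, rubric: list[Criterion]) -> tuple[int, dict[str, bool]]:
    """Return total points and a per-criterion breakdown for feedback."""
    breakdown = {c.name: c.check(text) for c in rubric}
    total = sum(c.points for c in rubric if breakdown[c.name])
    return total, breakdown


essay = "Sleep improves memory (Walker, 2017).\n\nIt also helps mood."
total, breakdown = score_essay(essay, RUBRIC)
print(total, breakdown)
```

The per-criterion breakdown is the useful part: it tells the student which rule was missed instead of returning only a number.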

Challenges: Accuracy, Bias, and Ethics

No technical system is perfect. Automated assessments face three major challenges in 2026.

1. The “Hallucination” Risk

LLMs can sometimes give confident but incorrect feedback. This is why professional Education Data Analytics systems use “Retrieval-Augmented Generation” (RAG), which grounds the model’s feedback in passages retrieved from trusted course material.

- Fact-Checking: The system checks the AI’s feedback against a verified textbook before showing it to the student.

- Human-in-the-Loop: High-stakes assessments (like final exams) still require a human “Spot-Check.”
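
The two safeguards above can be combined into a single routing decision, sketched below. This is a toy grounding check based on word overlap, not a full RAG pipeline or a real fact-checker. The function names, the `min_support` threshold, and the sample passage are assumptions for illustration.

```python
import re


def tokens(text: str) -> set[str]:
    return set(re.findall(r"[a-z]+", text.lower()))


def support_score(feedback: str, passage: str) -> float:
    """Fraction of the feedback's words that also appear in the passage."""
    feedback_tokens = tokens(feedback)
    if not feedback_tokens:
        return 0.0
    return len(feedback_tokens & tokens(passage)) / len(feedback_tokens)


def route_feedback(
    feedback: str,
    verified_passages: list[str],
    high_stakes: bool,
    min_support: float = 0.6,
) -> str:
    """Return 'release' or 'human_review' for a piece of generated feedback."""
    if high_stakes:
        return "human_review"  # final exams and similar always get a spot-check
    best = max(
        (support_score(feedback, p) for p in verified_passages), default=0.0
    )
    return "release" if best >= min_support else "human_review"


passages = ["Mitochondria produce most of the cell's ATP through cellular respiration."]
print(route_feedback("Mitochondria produce ATP through cellular respiration.", passages, False))
print(route_feedback("Ribosomes store genetic information.", passages, False))
```

Word overlap is a weak proxy for factual support, so a production system would likely use a stronger check. The routing structure, where unsupported or high-stakes feedback goes to a person, is the part this sketch is meant to show.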

2. Algorithmic Bias

If the training data for an AI is biased, the grading will be biased. For example, an AI might struggle with students who have strong regional accents or non-standard dialects.

- Bias Audits: Specialized services perform “Fairness Testing” on every model.

- Diverse Datasets: Using data from global student populations helps the AI understand different writing styles.
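
A very basic form of “Fairness Testing” compares average automated scores across student groups. The sketch below assumes each record is a `(group, score)` pair with scores on a 0 to 1 scale. The function names and the `max_gap` threshold are hypothetical.

```python
from collections import defaultdict
from statistics import mean


def group_mean_scores(records: list[tuple[str, float]]) -> dict[str, float]:
    """Average automated score per student group."""
    by_group: dict[str, list[float]] = defaultdict(list)
    for group, score in records:
        by_group[group].append(score)
    return {group: mean(scores) for group, scores in by_group.items()}


def max_score_gap(records: list[tuple[str, float]]) -> float:
    """Largest difference between any two group averages."""
    means = group_mean_scores(records)
    if len(means) < 2:
        return 0.0
    return max(means.values()) - min(means.values())


def needs_review(records: list[tuple[str, float]], max_gap: float = 0.05) -> bool:
    """True if the gap between groups is large enough to warrant an audit."""
    return max_score_gap(records) > max_gap


records = [("A", 0.8), ("A", 0.9), ("B", 0.7), ("B", 0.6)]
print(group_mean_scores(records), needs_review(records))
```

A gap between groups does not prove the model is biased, since the groups may differ for other reasons. It is a signal that the model’s scores should be compared against human grading for those groups.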

3. Data Privacy and FERPA

Student data is highly sensitive. In the US, the Family Educational Rights and Privacy Act (FERPA) restricts how schools may disclose personally identifiable information from student education records.

- Anonymization: Names and IDs are removed before data is sent to an AI model for analysis.

- Local Hosting: Many universities now run “Private AI” on their own servers to keep data within their walls.
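
The “Anonymization” step can be approximated with a keyed hash for IDs and simple name redaction, as sketched below. Strictly speaking this is pseudonymization: anyone holding the key can re-link records, so the key must stay on the institution’s side. The function names and the `[STUDENT]` placeholder are assumptions, and this sketch alone does not establish FERPA compliance.

```python
import hashlib
import hmac
import re


def pseudonymize_id(student_id: str, secret_key: bytes) -> str:
    """Replace a student ID with a stable keyed hash (HMAC-SHA256, truncated)."""
    digest = hmac.new(secret_key, student_id.encode("utf-8"), hashlib.sha256)
    return digest.hexdigest()[:16]


def redact_names(text: str, names: list[str]) -> str:
    """Replace whole-word occurrences of each name with a placeholder."""
    for name in names:
        pattern = r"\b" + re.escape(name) + r"\b"
        text = re.sub(pattern, "[STUDENT]", text, flags=re.IGNORECASE)
    return text


def prepare_submission(
    student_id: str, full_name: str, essay: str, secret_key: bytes
) -> dict[str, str]:
    """Build the payload that is allowed to leave the institution."""
    names = [full_name] + full_name.split()  # full name first, then each part
    return {
        "pseudonym": pseudonymize_id(student_id, secret_key),
        "text": redact_names(essay, names),
    }


key = b"demo-key-kept-on-campus"  # hypothetical secret, never sent to the model
payload = prepare_submission(
    "12345", "Ada Lovelace", "My name is Ada Lovelace. Adaptation matters.", key
)
print(payload["text"])
```

Simple string matching will miss nicknames, misspellings, and other identifying details in free text, so it should be treated as a first filter and not a guarantee.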

ROI of Automated Assessment Design

Investing in Education Data Analytics Services provides a clear financial and academic return.

| Metric | Manual Process | Automated Process |
| --- | --- | --- |
| Grading Labor | $50 – $100 per student | < $1 per student |
| Feedback Latency | 3 – 10 days | < 10 seconds |
| Course Scaling | Capped by staff size | Unlimited |
| Error Rate | 15% (Human Fatigue) | < 2% (Calibrated AI) |

Case Study: Large State University

A university in the Midwest faced a 20% budget cut while enrollment grew. They implemented an automated assessment system for their “Introduction to Psychology” course.

- The Setup: 5,000 students per semester.

- The Result: The university saved $120,000 in Teaching Assistant (TA) costs in the first year.

- The Academic Result: Average final exam scores rose by 8% because students used the “Instant Feedback” to study more effectively.

The Future: Agentic Learning Environments

In 2026, we are seeing the rise of “Agentic Learning,” which goes beyond simple assessment.

- Autonomous Tutors: These agents monitor a student’s progress 24/7. They don’t just grade work; they suggest when a student should take a break or review a previous lesson.

- Dynamic Assessments: The test changes while the student is taking it. If a student proves they know a concept, the test skips ahead to more difficult material.

- Emotion Analytics: Using webcams (with consent), AI identifies “Frustration” or “Boredom” through facial expressions. The system then adjusts the difficulty level to keep the student in the “Flow State.”
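
The “Dynamic Assessments” item above can be illustrated with a simple staircase rule: move up one difficulty level after a correct answer and down one after a miss. This is a deliberately simplified sketch. Production adaptive tests typically choose items using IRT ability estimates, and the level range of 1 to 5 is an assumption.

```python
def next_difficulty(
    current: int, was_correct: bool, min_level: int = 1, max_level: int = 5
) -> int:
    """Step difficulty up after a correct answer, down after a miss."""
    step = 1 if was_correct else -1
    return max(min_level, min(max_level, current + step))


def run_session(start: int, responses: list[bool]) -> list[int]:
    """Return the difficulty level before each question and after the last."""
    levels = [start]
    for was_correct in responses:
        levels.append(next_difficulty(levels[-1], was_correct))
    return levels


# Three correct answers push the student to the top level, then one miss.
print(run_session(3, [True, True, True, False]))  # [3, 4, 5, 5, 4]
```

The clamping at the top and bottom levels keeps the test inside the item bank even for a student who answers everything correctly or incorrectly.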

Technical Roadmap for Adoption

If you are looking to scale your feedback through Education Data Analytics, follow this technical roadmap:

- Audit Your Data: Ensure your Student Information System (SIS) can export clean, structured data.

- Pilot Small: Start with a single department like Math or Coding where logic is objective.

- Select a Framework: Choose between “Proprietary AI” or “Open-Source Models” based on your budget and privacy needs.

- Train Your Staff: Help teachers move from “Graders” to “Data-Informed Mentors.”

- Monitor for Bias: Regularly check that your automated feedback is fair to all student groups.

Conclusion

Automated assessment design is the only way to meet the global demand for high-quality education. Education Data Analytics transforms the grading process from a slow, manual chore into a fast, data-driven engine for success. By using professional Education Data Analytics Services, institutions can ensure their feedback is accurate, fair, and scalable.

In 2026, the goal is “High-Fidelity Feedback for All.” This is no longer a futuristic dream. It is a technical reality. As we automate the routine tasks of education, we allow teachers to focus on what matters most: the human connection. The future of learning is personalized, immediate, and powered by data. Investing in these systems today ensures that your institution remains competitive and effective in an increasingly digital world.